You need a reliable backup strategy. Consider the tech issues that plagued big airlines like Delta and Southwest: According to The Motley Fool, outages in the summer of 2016 forced both airlines to cancel thousands of flights and created PR nightmares. What’s more, it seems the backup systems in both cases were either non-existent or weren’t working as intended. Imagine the money saved and headaches avoided if their networks had simply switched over to working cloud-based or on-premises backups.

When it comes to failing backups, many businesses assume it “won’t happen to them.” Until it does. Unless you’re storing patient data, there’s likely no requirement for a solid backup plan, no legislation that demands you insure data against disaster. But all it takes is once. One hour without your data. One day without your network. One week without the ability to serve customers.

Bottom line? It’s time for a new backup strategy.

Best Practices

Backup plans require a significant time investment to succeed. That up-front investment is often where companies stumble: they would rather use shortcut solutions that promise high performance but haven’t been properly tested or optimized. The result? A data backup strategy that cracks under pressure.

To ensure your backup strategy is ready for prime time, start with a plan: What specific information needs to be backed up? Where will it be stored? How often will backups run, and who’s in charge of monitoring and testing them? It’s also important to think beyond your local office: what about data stored on employees’ personal computers or in the cloud? If you’re dealing with any personal, financial or health care data, you’re responsible for ensuring an auditable chain of ownership and use, even in the event of a disaster.
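
One way to keep those planning questions honest is to write the answers down as data and check them automatically. The sketch below is only an illustration: the `BackupJob` fields, the job names and the `overdue_jobs` helper are all hypothetical, and a real plan would pull the last successful run time from whatever backup tool you use.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class BackupJob:
    """One line of the backup plan. All field names are illustrative."""
    what: str               # which information is backed up
    where: str              # where the backup is stored
    every: timedelta        # how often the backup should run
    owner: str              # who monitors and tests this backup
    last_success: datetime  # when the last good backup finished


def overdue_jobs(jobs: list[BackupJob], now: datetime) -> list[BackupJob]:
    """Return the jobs whose last good backup is older than their interval."""
    return [job for job in jobs if now - job.last_success > job.every]


if __name__ == "__main__":
    now = datetime(2019, 1, 15, 12, 0)
    plan = [
        BackupJob("orders database", "on-site NAS", timedelta(hours=1),
                  "ops team", datetime(2019, 1, 15, 11, 30)),
        BackupJob("employee laptops", "cloud provider", timedelta(days=1),
                  "IT help desk", datetime(2019, 1, 13, 12, 0)),
    ]
    for job in overdue_jobs(plan, now):
        print(f"OVERDUE: {job.what} (owner: {job.owner})")
```

Naming an owner on every job is the point of the exercise: an overdue backup should always have someone to notify.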

Next up? Prioritize your data. Not all information needs to be part of an on-demand backup system, since the storage required to keep everything instantly available is often prohibitive. Instead, focus on the mission-critical files and applications you use day to day, and regularly update your backups to include the most recent versions.
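
As a rough illustration of prioritizing under a storage limit, the sketch below ranks data sets by criticality and fills a fixed on-demand budget from the top. The `DataSet` type, the priority scale, the sizes and the `select_for_on_demand` helper are assumptions made up for this example, not part of any product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataSet:
    """A group of files or an application's data. Fields are illustrative."""
    name: str
    size_gb: float
    priority: int  # 1 = mission-critical; larger numbers are less critical


def select_for_on_demand(datasets: list[DataSet], budget_gb: float) -> list[DataSet]:
    """Greedily pick the most critical data sets that fit in the budget."""
    chosen = []
    used = 0.0
    # Most critical first; among equals, smaller data sets first.
    for ds in sorted(datasets, key=lambda d: (d.priority, d.size_gb)):
        if used + ds.size_gb <= budget_gb:
            chosen.append(ds)
            used += ds.size_gb
    return chosen


if __name__ == "__main__":
    inventory = [
        DataSet("orders database", 200, 1),
        DataSet("CRM", 150, 1),
        DataSet("shared drive", 300, 2),
        DataSet("email archive", 900, 3),
    ]
    for ds in select_for_on_demand(inventory, budget_gb=500):
        print(ds.name)
```

Anything left out of the on-demand tier can still go to slower, cheaper storage; the selection only decides what must be restorable right away.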

On-Premises Solutions

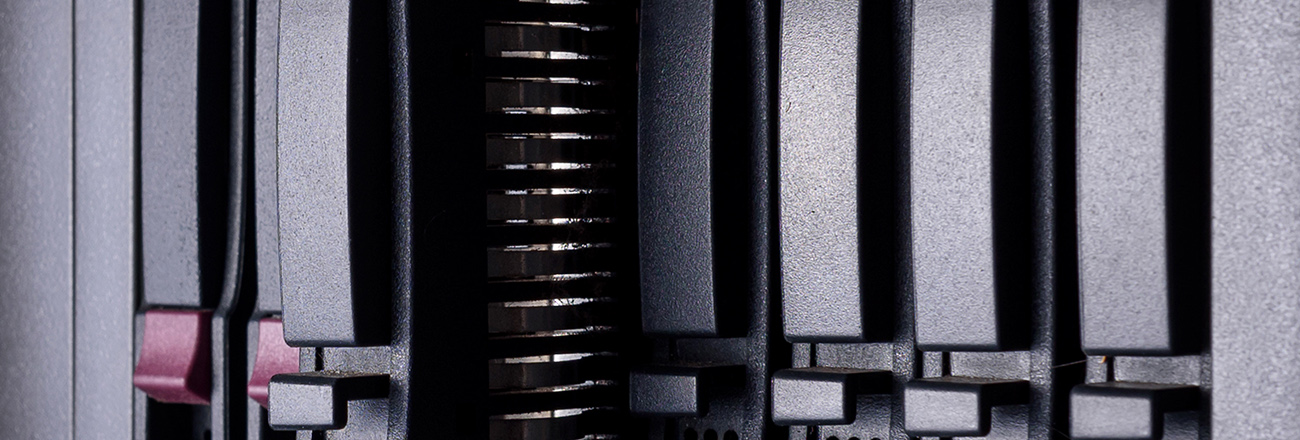

Once you have a basic data-loss prevention plan in place, location is next on your list. If you prefer to keep critical information close to home, consider an on-premises deployment. According to CIO, this could take several forms, including disaster-proof direct attached storage (DAS) or network attached storage (NAS) solutions that can withstand power outages, fires or floods for a certain period of time. Another option? On-site private clouds. This could mean buying hardware and building a disaster-hardened cloud from the ground up, or relying on dedicated private cloud providers to supply solutions that are protected from disasters that might cripple your network. It’s also possible to store your data entirely offline using magnetic tape, DVDs or even Blu-ray Discs. While this backup method means more time between failure and getting back in action, physical media are unaffected by Internet or power outages.

CIO offers a solid rule of thumb: 2+1. That’s two backup copies maintained on separate physical devices plus one hard-copy media version kept off site. For even better backup protection, try Veeam’s 3-2-1-0 rule: at least three copies of your data and apps, stored on two types of media, with one copy kept somewhere else. The zero? Zero errors, confirmed by regular testing to make sure your backup plan has no room for failure.
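
The 3-2-1-0 rule is simple enough to check mechanically. The following is a minimal sketch under stated assumptions: the `BackupCopy` record and `check_3210` function are hypothetical names, every copy (including the production one) counts toward the three, and "zero" is read as "every copy has been test-restored with no errors."

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BackupCopy:
    """One copy of the data. All field names are illustrative."""
    label: str
    media_type: str               # e.g. "disk", "tape", "cloud"
    offsite: bool                 # True if kept away from the primary site
    verify_errors: Optional[int]  # errors in the last restore test; None = never tested


def check_3210(copies: list[BackupCopy]) -> list[str]:
    """Return the rule violations found; an empty list means the set passes."""
    problems = []
    if len(copies) < 3:
        problems.append("fewer than three copies")
    if len({c.media_type for c in copies}) < 2:
        problems.append("fewer than two media types")
    if not any(c.offsite for c in copies):
        problems.append("no off-site copy")
    if any(c.verify_errors is None or c.verify_errors > 0 for c in copies):
        problems.append("untested or failing copy")
    return problems


if __name__ == "__main__":
    copies = [
        BackupCopy("production server", "disk", False, 0),
        BackupCopy("on-site NAS", "disk", False, 0),
        BackupCopy("tape in vault", "tape", True, None),
    ]
    print(check_3210(copies))  # ['untested or failing copy']
```

In the example, the tape copy satisfies the counting rules but has never been test-restored, so the set still fails the "zero" part.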

Going Cloud

Meanwhile, according to TechTarget, many companies are choosing the cloud-computing route to enhance their data backup services. If you’re considering backup as a service (BaaS) solutions, it’s worth understanding how your provider plans to back up critical data. Some layer their own software on top of generic cloud services such as Google Cloud, Amazon Web Services or Microsoft Azure, meaning they get the benefit of redundancy but lack the ability to easily control the environment or troubleshoot issues. Other providers leverage their own purpose-built cloud data centers and may allow companies to use their preferred backup hardware; the provider then takes care of maintenance, uptime and backup readiness.

Finally, some cloud providers own their hardware but use third-party software to manage your data during backups. Typically, this software already enjoys broad use and is extremely reliable, but lacks customization options. It’s worth noting that while cloud providers can help mitigate the impact of a disaster by using distance, they are often limited by bandwidth. For example, if your network goes down and you lose data, your provider must be able to send your backup data back quickly; technologies such as block-level incremental backup, deduplication and WAN optimization are often used to maximize transfer speed.
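
To show why block-level incremental backup and deduplication cut the amount of data sent over the wire, here is a toy sketch. It assumes fixed-size blocks identified by their SHA-256 hash and uses a plain dictionary as a stand-in for the provider’s storage; the function names and block size are illustrative, and real products use their own, more sophisticated schemes.

```python
import hashlib

BLOCK_SIZE = 4096  # illustrative block size in bytes


def split_blocks(data: bytes, size: int = BLOCK_SIZE) -> list[bytes]:
    """Cut the data into fixed-size blocks (the last one may be shorter)."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def incremental_backup(data: bytes, store: dict[str, bytes]) -> tuple[list[str], int]:
    """Send only blocks the store has not seen; return (manifest, bytes_sent)."""
    manifest = []
    bytes_sent = 0
    for block in split_blocks(data):
        digest = hashlib.sha256(block).hexdigest()
        if digest not in store:      # new or changed block: transfer it
            store[digest] = block
            bytes_sent += len(block)
        manifest.append(digest)      # known blocks cost only a reference
    return manifest, bytes_sent


def restore(manifest: list[str], store: dict[str, bytes]) -> bytes:
    """Rebuild the original data from its list of block hashes."""
    return b"".join(store[digest] for digest in manifest)


if __name__ == "__main__":
    store: dict[str, bytes] = {}
    monday = b"A" * 4096 + b"B" * 4096 + b"A" * 4096
    tuesday = b"A" * 4096 + b"C" * 4096 + b"A" * 4096  # one block changed

    m1, sent1 = incremental_backup(monday, store)
    m2, sent2 = incremental_backup(tuesday, store)
    print(sent1, sent2)  # 8192 4096
    assert restore(m2, store) == tuesday
```

The first backup sends two blocks instead of three because the repeated block is deduplicated; the second sends only the single block that changed.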

Next Steps

New backup options are also emerging. For example, hybrid solutions combine on-premises and cloud-based backups to provide the best of both worlds: critical data is supplied immediately by the local backup, while the cloud copy fills in the gaps. Another alternative? Disaster recovery as a service (DRaaS). Here, the idea is to deliver cloud backups that exist as a virtual machine (VM) at your provider’s data center. You get the ability to access your data as if it were local, while IT teams work to bring your systems back online. The caveat? If the connection to your provider fails or doesn’t have the necessary throughput, you’re left without data access.
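
The hybrid idea, local copy first and cloud copy for whatever is missing, can be sketched in a few lines. Everything here is a simplifying assumption: both backups are modeled as plain dictionaries keyed by file name, and `hybrid_restore` is a hypothetical helper rather than any vendor’s API.

```python
def hybrid_restore(wanted: list[str],
                   local: dict[str, bytes],
                   cloud: dict[str, bytes]) -> tuple[dict[str, tuple[str, bytes]], list[str]]:
    """Restore each file from the local backup if present, else from the cloud.

    Returns (restored, missing), where restored maps each file name to a
    (source, content) pair and missing lists files found in neither backup.
    """
    restored = {}
    missing = []
    for name in wanted:
        if name in local:        # fast path: on-premises copy
            restored[name] = ("local", local[name])
        elif name in cloud:      # slower path: fetched from the provider
            restored[name] = ("cloud", cloud[name])
        else:
            missing.append(name)
    return restored, missing


if __name__ == "__main__":
    local_backup = {"orders.db": b"orders"}
    cloud_backup = {"orders.db": b"orders", "archive.zip": b"archive"}
    restored, missing = hybrid_restore(
        ["orders.db", "archive.zip", "notes.txt"], local_backup, cloud_backup)
    print({name: source for name, (source, _) in restored.items()})
    print(missing)
```

A non-empty `missing` list is the signal worth alerting on: it means some data the business expects to recover exists in neither tier.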

To protect your data and ensure continued business operation, you need a solid backup strategy. Start with a plan, then identify the best solution for your needs — on-premises, cloud-based, hybrid and on-demand DRaaS are all viable options to help safeguard critical systems.

Updated: January 2019